Free excerpt - Artificial Intelligence Glossarium: 1000 terms

From the Authors

Dear Friends and Colleagues!

The authors of this book devoted two years to the preparation and creation of this glossary (a short dictionary of specialized terms).

The “pilot” version of the book was prepared in just eight months and presented at the 35th Moscow International Book Fair in 2022.

The cover image for the book was drawn using the Easy Diffusion artificial intelligence system.

Alexander Chesalov, Alexander Vlaskin, Matvey Bakanach

Why is the book called “Glossarium”?

“Glossarium” in Latin means a dictionary of highly specialized terms.

The idea of compiling “glossaries” belongs to one of the co-authors of the book – Alexander Chesalov. His first experience in this field was compiling a glossary on artificial intelligence and information technology, which he published in December 2021. It originally had only 400 terms. Later, in 2022, Alexander expanded it to more than 1000 relevant terms and definitions. Subsequently, he published a series of books covering the fourth industrial revolution, the digital economy, digital health and many other topics.

The idea of creating a large glossary on artificial intelligence was born in early 2022. The authors came to a unanimous decision to combine their efforts and their experience of recent years in the field of artificial intelligence, which was supported by several significant and fateful events.

Undoubtedly, the most significant event for us, which happened a little earlier, in 2021, was our participation in the competition held by the Analytical Center under the Government of Russia to select recipients of support for research centers in the field of artificial intelligence, including “strong” artificial intelligence, trusted artificial intelligence systems and the ethical aspects of the use of artificial intelligence. The authors, as specialists in the field of information technology, faced an extraordinary and, at that time, still unsolved task: creating a Center for the Development and Implementation of Strong and Applied Artificial Intelligence at Bauman Moscow State Technical University. All the authors of this book took a direct part in developing and writing the program and action plan of the new Center. You can learn more about this story from Alexander Chesalov’s book How to Create an Artificial Intelligence Center in 100 Days.

Further, we took part in the First International Forum “Ethics of Artificial Intelligence: The Beginning of Trust”, which took place on October 26, 2021. The forum hosted the signing ceremony of the National Code of Ethics of Artificial Intelligence, which establishes general ethical principles and standards of behavior that should guide participants in relations in the field of artificial intelligence. In fact, the forum became the first specialized platform in Russia to bring together about one and a half thousand developers and users of artificial intelligence technologies.

In addition, we did not miss the AI Journey International Conference on Artificial Intelligence and Data Analysis, where, on November 10, 2021, IT market leaders joined the signing of the National Code of Ethics for Artificial Intelligence. The number of conference speakers was impressive: there were more than two hundred of them, and the site received more than forty million online visits.

Summarizing our active work over the past couple of years and the experience we have accumulated, we saw the need to systematize this knowledge and present it in the new book that you hold in your hands.

We often hear about “Artificial Intelligence”.

But do we understand what it is?

For example, in this book we have established that Artificial Intelligence is a computer system based on a complex of scientific and engineering knowledge, as well as technologies for creating intelligent machines, programs, services and applications (for example, machine learning and deep learning), that imitates the thought processes of humans or other living beings. Such a system is capable of perceiving information with a certain degree of autonomy, learning and making decisions based on the analysis of large amounts of data, and its purpose is to help people solve their daily routine tasks.

One more example.

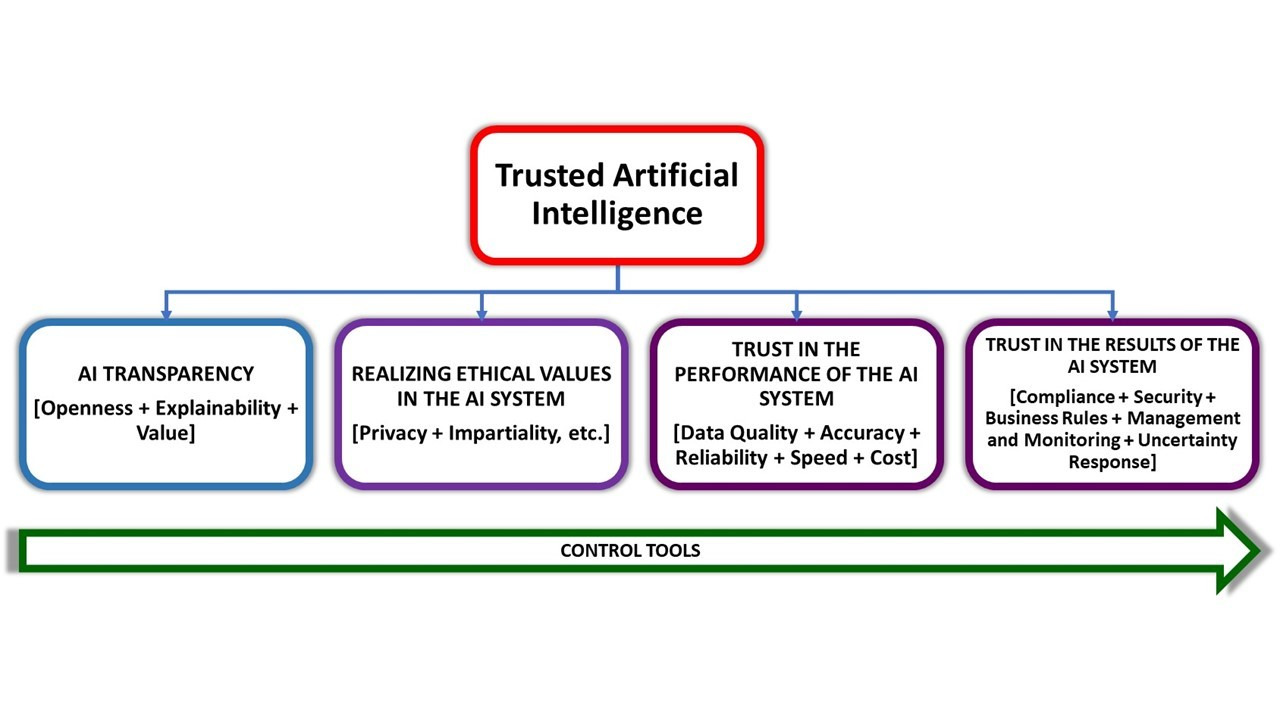

What is “Trusted Artificial Intelligence”?

A trusted artificial intelligence system is an applied artificial intelligence system that fulfills the tasks assigned to it while meeting a number of additional requirements that take into account the ethical aspects of the use of artificial intelligence and provide confidence in the results of its work. These requirements include: interpretability of the conclusions and proposed solutions obtained using the system and verified on test cases; security, both in terms of the impossibility of causing harm to system users throughout the entire life cycle of the system and in terms of protection against hacking, unauthorized access and other negative external influences; and privacy and verifiability of the data used by artificial intelligence algorithms, including access control and other related issues.

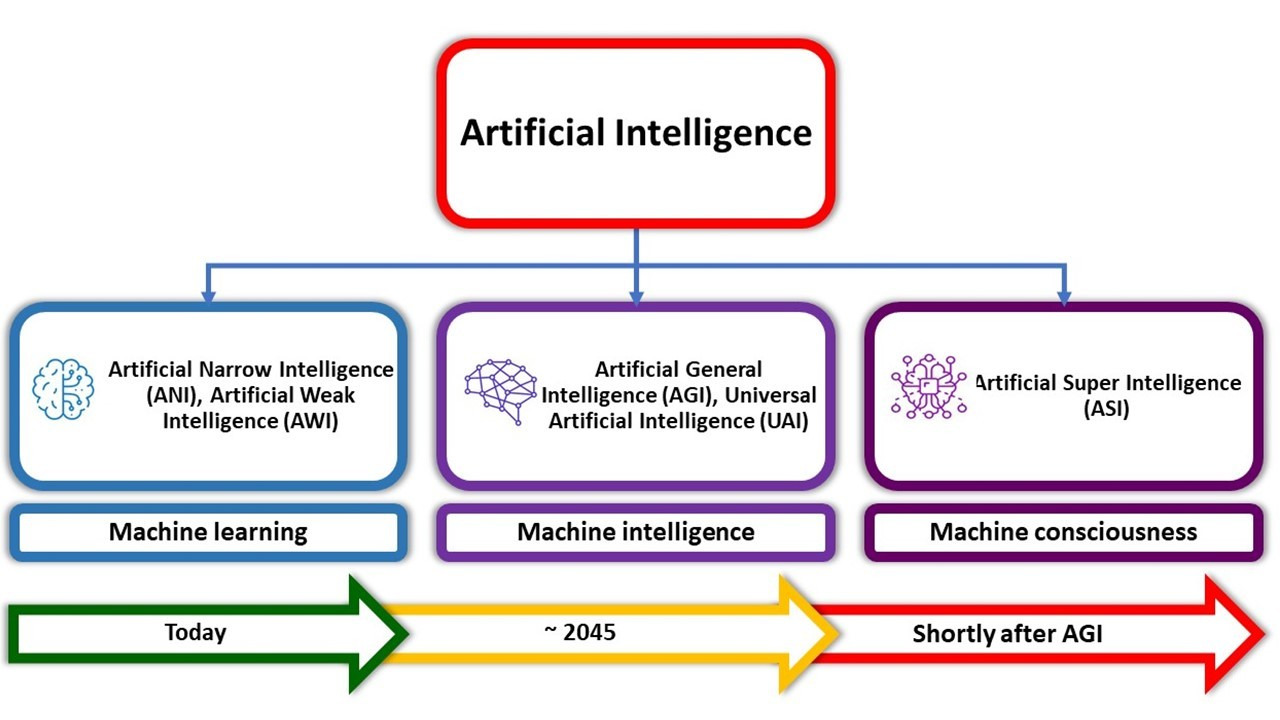

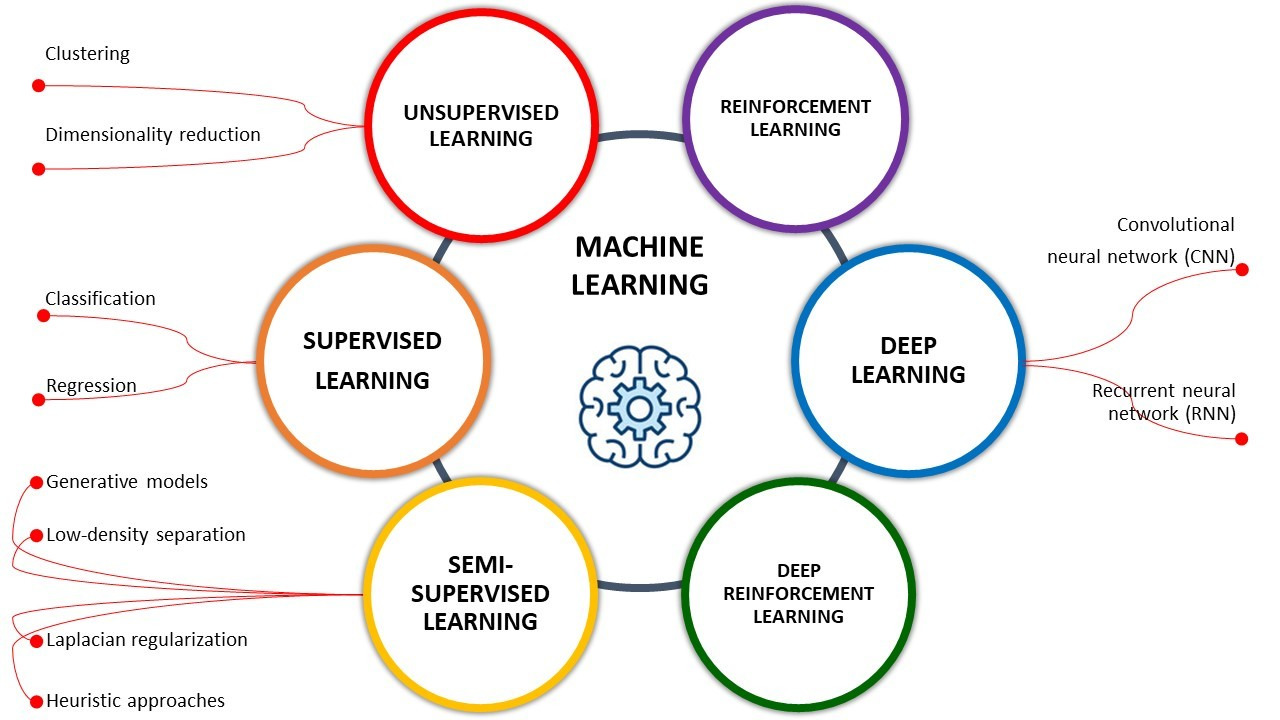

What then is “Machine Learning”?

Machine learning is one of the areas (subsets) of artificial intelligence, thanks to which the key property of intelligent computer systems is embodied – self-learning based on the analysis and processing of large heterogeneous data. The greater the amount of information and its diversity, the easier it is for artificial intelligence to find patterns and the more accurate the result will be.

To interest the dear reader, we will give a few more “funny” examples.

Have you ever heard of “Transhumanists”?

On the one hand, as an idea, Transhumanism is the empowerment of man through science. On the other hand, it is a philosophical concept and an international movement, whose adherents wish to become “post-humans” and overcome all kinds of physical limitations, illness, mental suffering, old age and death through the use of the possibilities of nano- and biotechnologies, artificial intelligence and cognitive science.

In our opinion, the ideas of “transhumanism” intersect very closely with the ideas of “digital human immortality”.

And how do you like such terms: “artificial life”, “artificial superintelligence”, “neuromorphic artificial intelligence”, “human-oriented artificial intelligence”, “synthetic intelligence”, “distributed artificial intelligence”, “friendly artificial intelligence”, “augmented artificial intelligence”, “composite artificial intelligence”, “explainable artificial intelligence”, “causal artificial intelligence”, “symbolic artificial intelligence”, and many others (they are all in this book)?

We can give many more such examples of “amazing” terms. But in our work, we did not waste time on the “harsh reality” and shifted the focus to a constructive and positive attitude. In short, we have done a great job for you and have collected more than 1000 terms and definitions on machine learning and artificial intelligence based on our experience and data from a huge number of different sources.

1000 terms and definitions.

Is it a lot or a little?

Our experience suggests that for mutual understanding it is enough for two interlocutors to know a dozen or a maximum of two dozen definitions, but when it comes to professional activities, it may turn out that it is not enough to know even a few dozen terms.

This book contains the terms and definitions that, in our opinion, are the most relevant and most frequently used, both in everyday work and in the professional activities of specialists in various professions interested in the topic of “artificial intelligence”.

We tried very hard to make a necessary and useful “tool” for your work.

In conclusion, we would like to inform the reader that this book is an open document that may be distributed freely. If you use it in your practical work, please cite this book.

Many of the terms and definitions in this book are found on the Internet. They are repeated dozens or hundreds of times on various information resources. We set ourselves the goal of collecting and systematizing the most relevant of them in one place from a variety of sources.

In view of the foregoing, we do not claim the authorship of the presented terms and definitions. Nevertheless, we have made a significant contribution to the systematization and updating of many of them.

The book is written primarily for your enjoyment.

We continue to work on improving the quality and content of the text of this book, including supplementing it with new knowledge in the subject area. We would be grateful for any feedback, suggestions and clarifications.

Happy reading and productive work!

Yours, Alexander Chesalov, Alexander Vlaskin and Matvey Bakanach.

08/16/2022. First edition.

03/09/2023. Second edition. Corrected and expanded.

11/25/2023. Third edition. Corrected and expanded.

Artificial Intelligence glossary

“A”

A/B Testing is a statistical way of comparing two (or more) techniques, typically an incumbent against a new rival. A/B testing aims to determine not only which technique performs better but also to understand whether the difference is statistically significant. A/B testing usually considers only two techniques using one measurement, but it can be applied to any finite number of techniques and measures.
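
The significance check mentioned above can be sketched with a two-proportion z-test, one common choice when the single measurement is a conversion rate. This is only an illustration under stated assumptions: the function name `two_proportion_z_test` and the sample counts are hypothetical, and other tests may suit other kinds of measurements better.

```python
import math

def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Return (z, two-sided p-value) for the difference between two rates."""
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    # Pooled rate under the null hypothesis that A and B perform the same.
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical data: incumbent A converts 200 of 1000, rival B converts 250 of 1000.
z, p = two_proportion_z_test(200, 1000, 250, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (for example, below a pre-agreed 0.05 threshold) suggests the observed difference is unlikely to be due to chance alone.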

Abductive logic programming (ALP) is a high-level knowledge-representation framework that can be used to solve problems declaratively based on abductive reasoning. It extends normal logic programming by allowing some predicates to be incompletely defined, declared as abducible predicates.

Abductive reasoning (also abduction, abductive inference, or retroduction) is a form of logical inference which starts with an observation or set of observations and then seeks to find the simplest and most likely explanation. This process, unlike deductive reasoning, yields a plausible conclusion but does not positively verify it.

Abstract data type is a mathematical model for data types, where a data type is defined by its behavior (semantics) from the point of view of a user of the data, specifically in terms of possible values, possible operations on data of this type, and the behavior of these operations.

Abstraction – the process of removing physical, spatial, or temporal details or attributes in the study of objects or systems in order to more closely attend to other details of interest.

Accelerating change is a perceived increase in the rate of technological change throughout history, which may suggest faster and more profound change in the future and may or may not be accompanied by equally profound social and cultural change.

Access to information – the ability to obtain information and use it.

Access to information constituting a commercial secret – familiarization of certain persons with information constituting a commercial secret, with the consent of its owner or on other legal grounds, provided that this information is kept confidential.

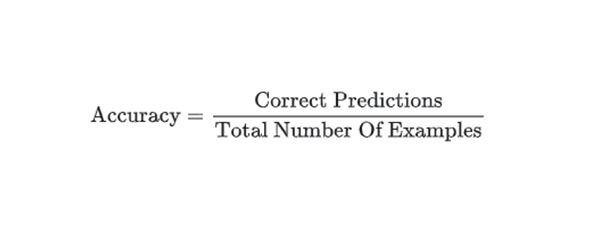

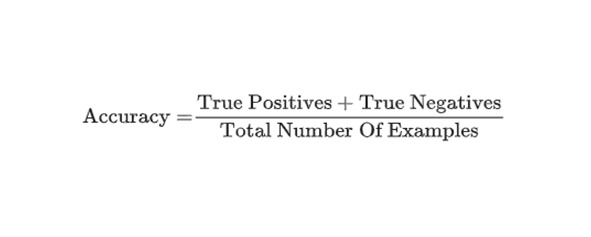

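Accuracy – the fraction of predictions that a classification model got right. In multi-class classification, accuracy is defined as follows:

$$\text{Accuracy} = \frac{\text{Correct Predictions}}{\text{Total Number of Examples}}$$

In binary classification, accuracy has the following definition:

$$\text{Accuracy} = \frac{\text{True Positives} + \text{True Negatives}}{\text{Total Number of Examples}}$$

See true positive and true negative. Contrast accuracy with precision and recall.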
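
As a minimal sketch, accuracy as "the fraction of predictions the model got right" can be computed directly from labels, or, in the binary case, from confusion-matrix counts. The function names and sample data here are illustrative assumptions.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels (any number of classes)."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label lists must be non-empty and of equal length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)

def binary_accuracy(tp, tn, fp, fn):
    """Binary accuracy from true/false positive and negative counts."""
    return (tp + tn) / (tp + tn + fp + fn)

print(accuracy(["cat", "dog", "cat", "bird"], ["cat", "dog", "dog", "bird"]))
print(binary_accuracy(tp=40, tn=50, fp=5, fn=5))
```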

Action, in reinforcement learning, is the mechanism by which the agent transitions between states of the environment. The agent chooses the action by using a policy.

Action language is a language for specifying state transition systems, and is commonly used to create formal models of the effects of actions on the world. Action languages are commonly used in the artificial intelligence and robotics domains, where they describe how actions affect the states of systems over time, and may be used for automated planning.

Action model learning is an area of machine learning concerned with creation and modification of software agent’s knowledge about effects and preconditions of the actions that can be executed within its environment. This knowledge is usually represented in logic-based action description language and used as the input for automated planners.

Action selection is a way of characterizing the most basic problem of intelligent systems: what to do next. In artificial intelligence and computational cognitive science, “the action selection problem” is typically associated with intelligent agents and animats — artificial systems that exhibit complex behaviour in an agent environment.

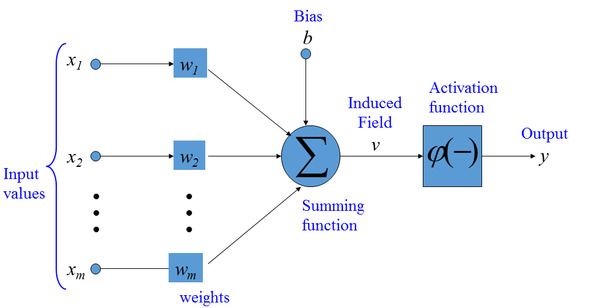

Activation function in the context of Artificial Neural Networks, is a function that takes in the weighted sum of all of the inputs from the previous layer and generates an output value to ignite the next layer.
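
A minimal sketch of this idea: a single neuron computes the weighted sum of its inputs and passes it through an activation function. The bias term and the choice of ReLU and sigmoid are assumptions added for illustration; the definition above mentions only the weighted sum.

```python
import math

def relu(z):
    """Rectified linear unit: passes positive values, zeroes out the rest."""
    return max(0.0, z)

def sigmoid(z):
    """Logistic function: squashes any real value into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, bias, activation):
    """Weighted sum of the previous layer's outputs, then the activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)

print(neuron_output([1.0, 2.0], [0.5, -1.0], 0.0, relu))
print(neuron_output([0.0, 0.0], [1.0, 1.0], 0.0, sigmoid))
```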

Active Learning/Active Learning Strategy is a special case of Semi-Supervised Machine Learning in which a learning agent is able to interactively query an oracle (usually, a human annotator) to obtain labels at new data points. It is a training approach in which the algorithm chooses some of the data it learns from. Active learning is particularly valuable when labeled examples are scarce or expensive to obtain. Instead of blindly seeking a diverse range of labeled examples, an active learning algorithm selectively seeks the particular range of examples it needs for learning.

Adam optimization algorithm is an extension of stochastic gradient descent that has gained wide acceptance for deep learning applications in computer vision and natural language processing.
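
To make the idea concrete, here is a minimal one-parameter sketch of the Adam update rule with its commonly used default coefficients. The function name, the learning rate and the toy objective are assumptions chosen for illustration, not a reference implementation.

```python
import math

def adam_minimize(grad, x0, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    """Minimize a one-dimensional function given its gradient, using Adam."""
    x, m, v = x0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad(x)
        m = beta1 * m + (1 - beta1) * g        # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g    # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)           # bias correction for early steps
        v_hat = v / (1 - beta2 ** t)
        x -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x

# Toy objective f(x) = (x - 3)^2, whose gradient is 2 * (x - 3).
print(adam_minimize(lambda x: 2 * (x - 3), x0=0.0))
```

The result should land close to the minimizer at 3; the exact digits depend on the step count and learning rate.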

Adaptive algorithm is an algorithm that changes its behavior at the time it is run, based on an a priori defined reward mechanism or criterion.

Adaptive Gradient Algorithm (AdaGrad) is a sophisticated gradient descent algorithm that rescales the gradients of each parameter, effectively giving each parameter an independent learning rate.
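
The per-parameter rescaling can be sketched as follows. This is a minimal illustration with hypothetical names (`adagrad_step`, `accum`) and toy values: each parameter divides its gradient by the square root of its own accumulated squared gradients.

```python
import math

def adagrad_step(params, grads, accum, lr=0.1, eps=1e-8):
    """Apply one AdaGrad update in place.

    accum[i] holds the running sum of squared gradients for parameter i,
    so parameters with large past gradients take smaller steps.
    """
    for i, g in enumerate(grads):
        accum[i] += g * g
        params[i] -= lr * g / (math.sqrt(accum[i]) + eps)
    return params

params = [1.0, 1.0]
accum = [0.0, 0.0]
# The gradients differ by a factor of 100, yet the first step is about lr for both.
adagrad_step(params, [10.0, 0.1], accum)
print(params)
```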

Adaptive neuro fuzzy inference system (ANFIS) (also adaptive network-based fuzzy inference system) is a kind of artificial neural network that is based on the Takagi-Sugeno fuzzy inference system. The technique was developed in the early 1990s. Since it integrates both neural networks and fuzzy logic principles, it has the potential to capture the benefits of both in a single framework. Its inference system corresponds to a set of fuzzy IF-THEN rules that have the learning capability to approximate nonlinear functions. Hence, ANFIS is considered to be a universal estimator. To use ANFIS in a more efficient and optimal way, one can use the best parameters obtained by a genetic algorithm.

Adaptive system is a system that automatically changes the data of its functioning algorithm and (sometimes) its structure in order to maintain or achieve an optimal state when external conditions change.

Additive technologies are technologies for the layer-by-layer creation of three-dimensional objects based on their digital models (“twins”), which make it possible to manufacture products of complex geometric shapes and profiles.

Admissible heuristic – in computer science, specifically in algorithms related to pathfinding, a heuristic function is said to be admissible if it never overestimates the cost of reaching the goal, i.e., the cost it estimates to reach the goal is not higher than the lowest possible cost from the current point in the path.
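
The "never overestimates" condition can be checked by brute force on a small example. The sketch below assumes a hypothetical grid world with unit-cost moves in four directions (1 marks a wall) and compares the Manhattan-distance heuristic with the true shortest-path cost found by breadth-first search.

```python
from collections import deque

def true_costs(grid, goal):
    """Breadth-first search from the goal: fewest moves from each free cell."""
    rows, cols = len(grid), len(grid[0])
    dist = {goal: 0}
    queue = deque([goal])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 0 and (nr, nc) not in dist):
                dist[(nr, nc)] = dist[(r, c)] + 1
                queue.append((nr, nc))
    return dist

def manhattan(cell, goal):
    """Heuristic: horizontal plus vertical distance, ignoring walls."""
    return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

def is_admissible(heuristic, grid, goal):
    """True if the heuristic never exceeds the true cost for any reachable cell."""
    return all(heuristic(cell, goal) <= cost
               for cell, cost in true_costs(grid, goal).items())

GRID = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
print(is_admissible(manhattan, GRID, (2, 0)))
print(is_admissible(lambda c, g: 2 * manhattan(c, g), GRID, (2, 0)))
```

Doubling the Manhattan distance overestimates the cost for the cell next to the goal, so the second check fails.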

Affective computing (also artificial emotional intelligence or emotion AI) – the study and development of systems and devices that can recognize, interpret, process, and simulate human affects. Affective computing is an interdisciplinary field spanning computer science, psychology, and cognitive science.

Agent architecture is a blueprint for software agents and intelligent control systems, depicting the arrangement of components. The architectures implemented by intelligent agents are referred to as cognitive architectures.

Agent, in reinforcement learning, is the entity that uses a policy to maximize expected return gained from transitioning between states of the environment.

Agglomerative clustering (see hierarchical clustering) is a clustering algorithm that first assigns every example to its own cluster and then iteratively merges the closest clusters to create a hierarchical tree.
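
The merge loop can be sketched in a few lines. This illustration makes several assumptions that the definition does not fix: points are one-dimensional numbers, the distance between clusters is single linkage (the closest pair of members), and merging stops at a requested number of clusters instead of building the full tree.

```python
def agglomerative(points, num_clusters):
    """Single-linkage agglomerative clustering of 1-D points."""
    clusters = [[p] for p in points]  # every example starts in its own cluster
    while len(clusters) > num_clusters:
        best = None
        # Find the pair of clusters whose closest members are nearest.
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                d = min(abs(a - b) for a in clusters[i] for b in clusters[j])
                if best is None or d < best[0]:
                    best = (d, i, j)
        _, i, j = best
        clusters[i] = clusters[i] + clusters[j]  # merge the closest pair
        del clusters[j]
    return clusters

print(agglomerative([1.0, 1.2, 5.0, 5.1, 9.0], 3))
```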

Aggregate is a total created from smaller units. For instance, the population of a county is an aggregate of the populations of the cities, rural areas, etc., that comprise the county. As a verb, to aggregate means to total data from smaller units into a larger unit.

Aggregator is a type of software that brings together various types of Web content and provides it in an easily accessible list. Feed aggregators collect things like online articles from newspapers or digital publications, blog postings, videos, podcasts, etc. A feed aggregator is also known as a news aggregator, feed reader, content aggregator or an RSS reader.

AI acceleration is the acceleration of AI-related computations; specialized AI hardware accelerators are used for this purpose (see also artificial intelligence accelerator, hardware acceleration).

AI accelerator is a class of microprocessor or computer system designed as hardware acceleration for artificial intelligence applications, especially artificial neural networks, machine vision, and machine learning.

AI accelerator is a specialized chip that improves the speed and efficiency of training and testing neural networks. However, for semiconductor chips, including most AI accelerators, there is a theoretical minimum power consumption limit. Reducing consumption is possible only with the transition to optical neural networks and optical accelerators for them.

AI benchmark is a standardized test for AI systems. To assess capabilities, efficiency and performance, and to compare ANNs, machine learning (ML) models, architectures and algorithms when solving various AI problems, special benchmark tests are created and standardized. For example, benchmarking graph neural networks (GNNs) usually includes installing a specific benchmark, loading initial datasets, testing ANNs, adding a new dataset and repeating iterations (see also artificial neural network benchmarks).

AI Building and Training Kits – applications and software development kits (SDKs) that abstract platforms, frameworks, analytics libraries, and data analysis appliances, allowing software developers to incorporate AI into new or existing applications.

AI camera is a new-generation digital camera with artificial intelligence. Such cameras analyze images by recognizing faces and their expressions, object contours, textures, gradients and lighting patterns, and take this into account when processing images; some AI cameras can take pictures on their own, without human intervention, at moments the camera finds most interesting (see also camera, software-defined camera).

AI chipset is a chipset for systems with AI; for example, the AI chipset industry is the industry of chipsets for systems with AI, and the AI chipset market is the market for such chipsets.

AI chipset market is the market for chipsets for artificial intelligence (AI) systems (see also AI chipset).

AI cloud services – AI model building tools, APIs, and associated middleware that enable you to build/train, deploy, and consume machine learning models that run on a prebuilt infrastructure as cloud services. These services include automated machine learning, machine vision services, and language analysis services.

AI CPU is a central processing unit for AI tasks, synonymous with AI processor.

AI engineer – AI systems engineer.

AI engineering – the transfer of AI technologies from the level of R&D, experiments and prototypes to the engineering and technical level, with the expanded implementation of AI methods and tools in IT systems to solve the real production problems of a company or organization. It is one of the strategic technological trends that can radically affect the economy, production, finance, the environment and, in general, the quality of life of individuals and humanity.

AI hardware (also AI-enabled hardware) – the hardware of an artificial intelligence system or of AI infrastructure.

AI industry – the industry built around AI technologies; for example, the commercial AI industry is the commercial sector of the AI industry.

AI industry trends are promising directions for the development of the AI industry.

AI infrastructure (also AI-defined infrastructure, AI-enabled infrastructure) – the infrastructure of artificial intelligence systems; for example, AI infrastructure research is research in the field of AI infrastructures (see also AI, AI hardware).

AI server (artificial intelligence server) – a server with (or based on) AI; a server that handles AI tasks.

AI shopper is a non-human economic entity that receives goods or services in exchange for payment. Examples include virtual personal assistants, smart appliances, connected cars, and IoT-enabled factory equipment. These AIs act on behalf of a human or organization client.

AI supercomputer is a supercomputer for artificial intelligence tasks, a supercomputer for AI, characterized by a focus on working with large amounts of data (see also artificial intelligence, supercomputer).

AI term is a term from the field of AI (from AI terminology or vocabulary); for example, “in AI terms” means in the language of AI (see also AI terminology).

AI terminology (artificial intelligence terminology) is a set of special technical terms related to the field of AI (see also AI term).

AI TRiSM (AI Trust, Risk and Security Management) is the management of an AI model to ensure trust, fairness, efficiency, security, and data protection.

AI vendor is a supplier of AI tools (systems, solutions).

AI winter (Winter of artificial intelligence) is a period of reduced interest in the subject area and reduced research funding. The term was coined by analogy with the idea of nuclear winter. The field of artificial intelligence has gone through several cycles of hype, followed by disappointment and criticism, followed by a strong cooling off of interest, and then followed by renewed interest years or decades later.

AI workstation is a workstation (PC) with AI tools or based on AI: a specialized desktop computer for solving technical or scientific problems and AI tasks. It is usually connected to a LAN with multi-user operating systems and is intended primarily for the individual work of one specialist.

AI-based management system – the process of creating policies, allocating decision-making rights and ensuring organizational responsibility for risk and investment decisions for an application, as well as using artificial intelligence methods.

AI-based systems are information processing technologies that include models and algorithms that provide the ability to learn and perform cognitive tasks, with results in the form of predictive assessment and decision making in a material and virtual environment. AI systems are designed to work with some degree of autonomy through modeling and representation of knowledge, as well as the use of data and the calculation of correlations. AI-based systems can use various methodologies, in particular: machine learning, including deep learning and reinforcement learning; automated reasoning, including planning, dispatching, knowledge representation and reasoning, search and optimization. AI-based systems can be used in cyber-physical systems, including equipment control systems via the Internet, robotic equipment, social robotics and human-machine interface systems that combine the functions of control, recognition, processing of data collected by sensors, as well as the operation of actuators in the environment of functioning of AI systems.

AI-complete. In the field of artificial intelligence, the most difficult problems are informally known as AI-complete or AI-hard, implying that the difficulty of these computational problems is equivalent to that of solving the central artificial intelligence problem — making computers as intelligent as people, or strong AI. To call a problem AI-complete reflects an attitude that it would not be solved by a simple specific algorithm.

AI-enabled – using AI and equipped with AI, for example, AI-enabled tools – tools with AI (see also AI-enabled device).

AI-enabled device is a device supported by an artificial intelligence (AI) system, such as an intelligent robot (see also AI-enabled healthcare device).

AI-enabled healthcare device is an AI-enabled device for healthcare (medical care); see also AI-enabled device.

AI-enabled describes hardware or software that uses AI, such as AI-enabled tools.

AI-optimized – optimized for AI tasks or optimized using AI tools; for example, an AI-optimized chip is a chip that is optimized for AI tasks (see also artificial intelligence).

AlexNet – the name of a neural network that won the ImageNet Large Scale Visual Recognition Challenge in 2012. It is named after Alex Krizhevsky, then a computer science PhD student at the University of Toronto. See ImageNet.

Algorithm – an exact prescription for the execution, in a certain order, of a system of operations for solving any problem from some given class (set) of problems. The term “algorithm” comes from the name of the mathematician Muhammad ibn Musa al-Khwarizmi, a native of Khwarazm (in present-day Uzbekistan), who in the 9th century proposed the simplest arithmetic algorithms. In mathematics and cybernetics, a class of problems of a certain type is considered solved when an algorithm is established to solve it. Finding algorithms is a natural human goal in solving various classes of problems.

Algorithmic Assessment is a technical evaluation that helps identify and address potential risks and unintended consequences of AI systems across your business, to engender trust and build supportive systems around AI decision making.

AlphaGo is the first computer program to defeat a professional player at the board game Go, in October 2015. Later, in October 2017, AlphaGo’s team released a new version named AlphaGo Zero, which is stronger than any of the previous versions that defeated human champions. Go is played on a 19 by 19 board, which allows for $10^{171}$ possible layouts (chess has $10^{50}$ configurations). It is estimated that there are $10^{80}$ atoms in the universe.

Ambient intelligence (AmI) represents the future vision of intelligent computing where explicit input and output devices will not be required; instead, sensors and processors will be embedded into everyday devices and the environment will adapt to the user’s needs and desires seamlessly. AmI systems will use the contextual information gathered through these embedded sensors and apply Artificial Intelligence (AI) techniques to interpret and anticipate the users’ needs. The technology will be designed to be human-centric and easy to use.

Analogical Reasoning – solving problems by using analogies, by comparing to past experiences.

Analysis of algorithms (AofA) – the determination of the computational complexity of algorithms, that is the amount of time, storage and/or other resources necessary to execute them. Usually, this involves determining a function that relates the length of an algorithm’s input to the number of steps it takes (its time complexity) or the number of storage locations it uses (its space complexity).
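
As an illustration of relating input length to step count, the sketch below counts loop iterations for linear search and binary search on a sorted list. The function names and the choice of "one loop iteration" as the unit of work are assumptions made for this example.

```python
def linear_search_steps(items, target):
    """Number of elements examined by a left-to-right scan."""
    steps = 0
    for item in items:
        steps += 1
        if item == target:
            break
    return steps

def binary_search_steps(items, target):
    """Number of midpoints examined by binary search on a sorted list."""
    lo, hi, steps = 0, len(items) - 1, 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps

for n in (16, 256, 1024):
    data = list(range(n))
    # Searching for the last element is the worst case for the linear scan.
    print(n, linear_search_steps(data, n - 1), binary_search_steps(data, n - 1))
```

The linear scan's step count grows in proportion to the input length, while the binary search's count grows roughly with its logarithm.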

Annotation is a metadatum attached to a piece of data, typically provided by a human annotator.

Anokhin’s theory of functional systems holds that a functional system consists of a certain number of nodal mechanisms, each of which takes its place and has a specific purpose. The first of these is afferent synthesis, in which four obligatory components are distinguished: dominant motivation, situational afferentation, triggering afferentation, and memory. The interaction of these components leads to the decision-making process.

Anomaly detection – the process of identifying outliers. For example, if the mean for a certain feature is 100 with a standard deviation of 10, then anomaly detection should flag a value of 200 as suspicious.
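
The numeric example above corresponds to a simple z-score rule, sketched below. The threshold of 3 standard deviations is a common convention assumed here, not something the definition specifies.

```python
def z_score(value, mean, std):
    """How many standard deviations a value lies from the mean."""
    return (value - mean) / std

def is_anomaly(value, mean, std, threshold=3.0):
    """Flag values whose distance from the mean exceeds the threshold."""
    return abs(z_score(value, mean, std)) > threshold

# Mean 100, standard deviation 10: the value 200 is 10 deviations away.
print(z_score(200, 100, 10), is_anomaly(200, 100, 10))
print(z_score(115, 100, 10), is_anomaly(115, 100, 10))
```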

Anonymization – the process in which data is de-identified as part of a mechanism to submit data for machine learning.

Answer set programming (ASP) is a form of declarative programming oriented towards difficult (primarily NP-hard) search problems. It is based on the stable model (answer set) semantics of logic programming. In ASP, search problems are reduced to computing stable models, and answer set solvers — programs for generating stable models — are used to perform search.

Antivirus software is a program or set of programs that are designed to prevent, search for, detect, and remove software viruses, and other malicious software like worms, trojans, adware, and more.

Anytime algorithm is an algorithm that can return a valid solution to a problem even if it is interrupted before it ends.
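
A minimal sketch of the anytime property, using a hypothetical example: bisection for a square root, written as a generator so the caller can stop after any step and still hold a usable, steadily tightening estimate. The step budget stands in for an interruption.

```python
def anytime_sqrt(x):
    """Yield ever-tighter estimates of sqrt(x) for x >= 0 by bisection."""
    lo, hi = 0.0, max(1.0, x)
    while True:
        mid = (lo + hi) / 2
        if mid * mid <= x:
            lo = mid
        else:
            hi = mid
        yield (lo + hi) / 2  # current best answer, usable at any time

def run_with_budget(estimates, max_steps):
    """Consume estimates until the step budget runs out; return the latest."""
    best = None
    for step, estimate in enumerate(estimates):
        best = estimate
        if step + 1 >= max_steps:
            break
    return best

print(run_with_budget(anytime_sqrt(2.0), 3))   # interrupted early: rough answer
print(run_with_budget(anytime_sqrt(2.0), 40))  # more time: tighter answer
```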

API-as-a-service (AaaS) combines the API economy and software renting and provides application programming interfaces as a service.

Application programming interface (API) is a set of subroutine definitions, communication protocols, and tools for building software. In general terms, it is a set of clearly defined methods of communication among various components. A good API makes it easier to develop a computer program by providing all the building blocks, which are then put together by the programmer. An API may be for a web-based system, operating system, database system, computer hardware, or software library.

Application security is the process of making apps more secure by finding, fixing, and enhancing the security of apps. Much of this happens during the development phase, but it includes tools and methods to protect apps once they are deployed. This is becoming more important as hackers increasingly target applications with their attacks.

Application-specific integrated circuit (ASIC) is a specialized integrated circuit for solving a specific problem.

Approximate string matching (also fuzzy string searching) – the technique of finding strings that match a pattern approximately (rather than exactly). The problem of approximate string matching is typically divided into two sub-problems: finding approximate substring matches inside a given string and finding dictionary strings that match the pattern approximately.
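The second sub-problem (finding dictionary strings that match a pattern approximately) can be sketched with the Levenshtein edit distance. The choice of edit distance as the similarity measure, the function names and the `max_distance` parameter are assumptions made for this illustration; other measures are also used in practice.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum number of single-character
    insertions, deletions and substitutions that turn a into b."""
    previous = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(
                previous[j] + 1,         # delete ch_a
                current[j - 1] + 1,      # insert ch_b
                previous[j - 1] + cost,  # substitute or match
            ))
        previous = current
    return previous[-1]


def fuzzy_lookup(pattern: str, dictionary: list[str], max_distance: int = 1) -> list[str]:
    """Return dictionary words within max_distance edits of the pattern."""
    return [word for word in dictionary if edit_distance(pattern, word) <= max_distance]


print(edit_distance("kitten", "sitting"))                         # 3
print(fuzzy_lookup("aple", ["apple", "maple", "grape", "ample"]))  # ['apple', 'maple', 'ample']
```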

Approximation error – the discrepancy between an exact value and some approximation to it.

Architectural description group (Architectural view) is a representation of the system as a whole in terms of a related set of interests.

Architectural frameworks are high-level descriptions of an organization as a system; they capture the structure of its main components at varied levels, the interrelationships among these components, and the principles that guide their evolution.

Architecture of a computer is a conceptual structure of a computer that determines the processing of information and includes methods for converting information into data and the principles of interaction between hardware and software.

Architecture of a computing system is the configuration, composition and principles of interaction (including data exchange) of the elements of a computing system.

Architecture of a system is the fundamental organization of a system, embodied in its elements, their relationships with each other and with the environment, as well as the principles that guide its design and evolution.

Archival Information Collection (AIC) is information whose content is an aggregation of other archive information packages. The digital preservation function preserves the capability to regenerate the DIPs (Dissemination Information Packages) as needed over time.

Archival Storage is a source for data that is not needed for an organization’s everyday operations, but may have to be accessed occasionally. By utilizing archival storage, organizations can move such data to secondary storage while still maintaining the protection of the data. Using archival storage reduces primary storage costs and allows an organization to retain data that may be required for regulatory or other purposes.

Area under curve (AUC) – the area under a curve between two points, calculated as the definite integral between those points. In the context of a receiver operating characteristic (ROC) for a binary classifier, the AUC summarizes how well the classifier ranks positive examples above negative ones across all decision thresholds.

Area Under the ROC curve is the probability that a classifier will be more confident that a randomly chosen positive example is actually positive than that a randomly chosen negative example is positive.
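The probabilistic reading of the area under the ROC curve can be computed directly by comparing every positive example with every negative one. The sketch below is a simple quadratic-time illustration; the function name and the convention of counting ties as one half are assumptions of this example.

```python
def roc_auc(labels: list[int], scores: list[float]) -> float:
    """Fraction of (positive, negative) pairs in which the positive
    example receives the higher score; ties count as one half."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Two positives (scores 0.9, 0.7) and two negatives (scores 0.8, 0.1):
# three of the four pairs are ranked correctly.
print(roc_auc([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.1]))  # 0.75
```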

Argumentation framework is a way to deal with contentious information and draw conclusions from it. In an abstract argumentation framework, entry-level information is a set of abstract arguments that, for instance, represent data or a proposition. Conflicts between arguments are represented by a binary relation on the set of arguments.

Artifact is one of many kinds of tangible by-products produced during the development of software. Some artifacts (e.g., use cases, class diagrams, and other Unified Modeling Language (UML) models, requirements and design documents) help describe the function, architecture, and design of software. Other artifacts are concerned with the process of development itself — such as project plans, business cases, and risk assessments.

Artificial General Intelligence (AGI), as opposed to narrow intelligence, is also known as complete, strong, or super intelligence, or Human Level Machine Intelligence. It denotes the ability of a machine to successfully perform any intellectual task that a human being can perform. Artificial superintelligence is a term referring to the time when the capability of computers will surpass humans.

Artificial Intelligence (AI) – (machine intelligence) refers to systems that display intelligent behavior by analyzing their environment and taking actions — with some degree of autonomy — to achieve specific goals. AI-based systems can be purely software-based, acting in the virtual world (e.g., voice assistants, image analysis software, search engines, speech and face recognition systems) or AI can be embedded in hardware devices (e.g., advanced robots, autonomous cars, drones, or Internet of Things applications). The term AI was first coined by John McCarthy in 1956.

Artificial Intelligence Automation Platforms – platforms that enable the automation and scaling of production-ready AI. Artificial Intelligence Platforms involve the use of machines to perform tasks that are otherwise performed by human beings. The platforms simulate the cognitive functions that human minds perform, such as problem-solving, learning, reasoning, social intelligence as well as general intelligence. Top Artificial Intelligence Platforms: Google AI Platform, TensorFlow, Microsoft Azure, Rainbird, Infosys Nia, Wipro HOLMES, Dialogflow, Premonition, Ayasdi, MindMeld, Meya, KAI, Vital A.I, Wit, Receptiviti, Watson Studio, Lumiata, Infrrd.

Artificial intelligence engine (also AI engine, AIE) is a hardware and software solution for increasing the speed and efficiency of artificial intelligence system tools.

Artificial Intelligence for IT Operations (AIOps) is an emerging IT practice that applies artificial intelligence to IT operations to help organizations intelligently manage infrastructure, networks, and applications for performance, resilience, capacity, uptime, and, in some cases, security. By shifting traditional, threshold-based alerts and manual processes to systems that take advantage of AI and machine learning, AIOps enables organizations to better monitor IT assets and anticipate negative incidents and impacts before they take hold. AIOps is a term coined by Gartner in 2016 as an industry category for machine learning analytics technology that enhances IT operations analytics, covering operational tasks that include automation, performance monitoring and event correlation, among others. Gartner defines an AIOps platform thus: “An AIOps platform combines big data and machine learning functionality to support all primary IT operations functions through the scalable ingestion and analysis of the ever-increasing volume, variety and velocity of data generated by IT. The platform enables the concurrent use of multiple data sources, data collection methods, and analytical and presentation technologies.”

Artificial Intelligence Markup Language (AIML) is an XML dialect for creating natural language software agents.

Artificial Intelligence of the Commonsense knowledge is one of the areas of development of artificial intelligence, which is engaged in modeling the ability of a person to analyze various life situations and be guided in his actions by common sense.

Artificial Intelligence Open Library is a set of algorithms designed to develop technological solutions based on artificial intelligence, described using programming languages and posted on the Internet.

Artificial intelligence system (AIS) is a programmed or digital mathematical model (implemented using computer computing systems) of human intellectual capabilities, the main purpose of which is to search, analyze and synthesize large amounts of data from the world around us in order to obtain new knowledge about it and, on that basis, solve various vital tasks. The discipline “Artificial Intelligence Systems” includes consideration of the main issues of modern theory and practice of building intelligent systems.

Artificial intelligence technologies – technologies based on the use of artificial intelligence, including computer vision, natural language processing, speech recognition and synthesis, intelligent decision support and advanced methods of artificial intelligence.

Artificial life (Alife, A-Life) is a field of study wherein researchers examine systems related to natural life, its processes, and its evolution, through the use of simulations with computer models, robotics, and biochemistry. The discipline was named by Christopher Langton, an American theoretical biologist, in 1986. In 1987 Langton organized the first conference on the field, in Los Alamos, New Mexico. There are three main kinds of alife, named for their approaches: soft, from software; hard, from hardware; and wet, from biochemistry. Artificial life researchers study traditional biology by trying to recreate aspects of biological phenomena.

Artificial Narrow Intelligence (ANI), also known as weak or applied intelligence, represents most of the current artificial intelligent systems which usually focus on a specific task. Narrow AIs are mostly much better than humans at the task they were made for: for example, look at face recognition, chess computers, calculus, and translation. The definition of artificial narrow intelligence is in contrast to that of strong AI or artificial general intelligence, which aims at providing a system with consciousness or the ability to solve any problems. Virtual assistants and AlphaGo are examples of artificial narrow intelligence systems.

Artificial Neural Network (ANN) is a computational model in machine learning, which is inspired by the biological structures and functions of the mammalian brain. Such a model consists of multiple units called artificial neurons which build connections between each other to pass information. The advantage of such a model is that it progressively “learns” the tasks from the given data without specific programming for a single task.

Artificial neuron is a mathematical function conceived as a model of a biological neuron in a neural network. The difference between an artificial neuron and a biological neuron is shown in the figure. Artificial neurons are the elementary units of an artificial neural network. An artificial neuron receives one or more inputs (representing excitatory postsynaptic potentials and inhibitory postsynaptic potentials on nerve dendrites) and sums them to produce an output signal (or activation, representing the action potential of the neuron that is transmitted down its axon). Typically, each input is weighted separately, and the sum is passed through a non-linear function known as an activation function or transfer function. Transfer functions are usually sigmoid, but they can also take the form of other non-linear functions, piecewise linear functions, or step functions. They are also often monotonically increasing, continuous, differentiable, and bounded.
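The weighted sum followed by a transfer function can be sketched in a few lines. The function names are illustrative, and the `bias` term is an assumption added for the example (the definition above does not mention it); the sigmoid and step functions stand in for the transfer functions the definition lists.

```python
import math
from typing import Callable, Sequence


def sigmoid(x: float) -> float:
    """Smooth, bounded, monotonically increasing transfer function."""
    return 1.0 / (1.0 + math.exp(-x))


def step(x: float) -> float:
    """Step transfer function: fires (1.0) when the sum is non-negative."""
    return 1.0 if x >= 0 else 0.0


def neuron(inputs: Sequence[float], weights: Sequence[float], bias: float = 0.0,
           activation: Callable[[float], float] = sigmoid) -> float:
    """Weight each input separately, sum, then apply the transfer function."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(total)


# 0.5 * 1.0 + (-0.25) * 2.0 = 0.0, and sigmoid(0.0) = 0.5
print(neuron([1.0, 2.0], [0.5, -0.25]))                   # 0.5
print(neuron([1.0, 2.0], [0.5, -0.25], activation=step))  # 1.0
```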

Artificial Superintelligence (ASI) is a term referring to the time when the capability of computers will surpass humans. “Artificial intelligence,” which has been much used since the 1970s, refers to the ability of computers to mimic human thought. Artificial superintelligence goes a step beyond and posits a world in which a computer’s cognitive ability is superior to a human’s.

Assistive intelligence is AI-based systems that help make decisions or perform actions.

Association for the Advancement of Artificial Intelligence (AAAI) is an international, nonprofit, scientific society devoted to promoting research in, and responsible use of, artificial intelligence. AAAI also aims to increase public understanding of artificial intelligence (AI), improve the teaching and training of AI practitioners, and provide guidance for research planners and funders concerning the importance and potential of current AI developments and future directions.

Association is a type of unsupervised learning method that uses different rules to find relationships between variables in a given dataset. These methods are frequently used for market basket analysis and recommendation engines, along the lines of “Customers Who Bought This Item Also Bought” recommendations.

Association Rule Learning is a rule-based Machine Learning method for discovering interesting relations between variables in large data sets.

Asymptotic computational complexity – in computational complexity theory, the usage of asymptotic analysis for the estimation of the computational complexity of algorithms and computational problems, commonly associated with the usage of big O notation.

Asynchronous inter-chip protocols are protocols for data exchange in low-speed devices; instead of frames, individual characters are used to control the exchange of data.

Attention mechanism is one of the key innovations in the field of neural machine translation. Attention allowed neural machine translation models to outperform classical machine translation systems based on phrase translation. The main bottleneck in sequence-to-sequence learning is that the entire content of the original sequence needs to be compressed into a vector of a fixed size. The attention mechanism facilitates this task by allowing the decoder to look back at the hidden states of the original sequence, which are then provided as a weighted average as additional input to the decoder.
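The weighted average over encoder hidden states can be sketched as follows. This is a minimal illustration under stated assumptions: the relevance of each hidden state is scored with a plain dot product against the decoder state and normalized with a softmax, which is only one of several scoring schemes; the function names are hypothetical.

```python
import math
from typing import List, Tuple


def softmax(scores: List[float]) -> List[float]:
    """Turn arbitrary scores into positive weights that sum to 1."""
    largest = max(scores)  # subtracted for numerical stability
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(decoder_state: List[float],
           encoder_states: List[List[float]]) -> Tuple[List[float], List[float]]:
    """Return attention weights and the context vector (weighted average
    of the encoder hidden states). Dot-product scoring is an assumption."""
    scores = [sum(d * h for d, h in zip(decoder_state, state)) for state in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [sum(w * state[k] for w, state in zip(weights, encoder_states))
               for k in range(dim)]
    return weights, context


weights, context = attend([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0]])
print(weights)  # the first hidden state receives the larger weight
print(context)  # extra input passed to the decoder
```

Because the context vector is recomputed at every decoding step, the decoder is no longer limited to a single fixed-size summary of the source sequence.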

Attributional calculus (AC) is a logic and representation system defined by Ryszard S. Michalski. It combines elements of predicate logic, propositional calculus, and multi-valued logic. Attributional calculus provides a formal language for natural induction, an inductive learning process whose results are in forms natural to people.

Augmented Intelligence is the intersection of machine learning and advanced applications, where clinical knowledge and medical data converge on a single platform. The potential benefits of Augmented Intelligence are realized when it is used in the context of workflows and systems that healthcare practitioners operate and interact with. Unlike Artificial Intelligence, which tries to replicate human intelligence, Augmented Intelligence works with and amplifies human intelligence.

Augmented reality (AR) is an interactive experience of a real-world environment where the objects that reside in the real-world are “augmented” by computer-generated perceptual information, sometimes across multiple sensory modalities, including visual, auditory, haptic, somatosensory, and olfactory.

Augmented reality technologies are visualization technologies based on adding information or visual effects to the physical world by overlaying graphic and/or sound content to improve user experience and interactive features.

Auto Associative Memory is a single layer neural network in which the input training vector and the output target vectors are the same. The weights are determined so that the network stores a set of patterns. As shown in the following figure, the architecture of Auto Associative memory network has ‘n’ number of input training vectors and similar ‘n’ number of output target vectors.

Autoencoder (AE) is a type of Artificial Neural Network used to produce efficient representations of data in an unsupervised and non-linear manner, typically to reduce dimensionality.

Automata theory – the study of abstract machines and automata, as well as the computational problems that can be solved using them. It is a theoretical branch of computer science and discrete mathematics, and part of the theory of computation, which mainly deals with the logic of computation with respect to simple machines, referred to as automata.

Automated control system – a set of software and hardware designed to control technological and (or) production equipment (executive devices) and the processes they produce, as well as to control such equipment and processes.

Automated planning and scheduling (also simply AI planning) is a branch of artificial intelligence that concerns the realization of strategies or action sequences, typically for execution by intelligent agents, autonomous robots and unmanned vehicles. Unlike classical control and classification problems, the solutions are complex and must be discovered and optimized in multidimensional space. Planning is also related to decision theory.

Automated processing of personal data – processing of personal data using computer technology.

Automated reasoning is an area of computer science and mathematical logic dedicated to understanding different aspects of reasoning. The study of automated reasoning helps produce computer programs that allow computers to reason completely, or nearly completely, automatically. Although automated reasoning is considered a sub-field of artificial intelligence, it also has connections with theoretical computer science, and even philosophy.

Automated system is an organizational and technical system that guarantees the development of solutions based on the automation of information processes in various fields of activity.

Automation bias is when a human decision maker favors recommendations made by an automated decision-making system over information made without automation, even when the automated decision-making system makes errors.

Automation is a technology by which a process or procedure is performed with minimal human intervention.

Autonomic computing is the ability of a system to adaptively self-manage its own resources for high-level computing functions without user input.

Autonomous artificial intelligence is a biologically inspired system that tries to reproduce the structure of the brain and the principles of its operation, with all the properties that follow from this.

Autonomous car (also self-driving car, robot car, and driverless car) is a vehicle that is capable of sensing its environment and moving with little or no human input.

Autonomous – a machine is described as autonomous if it can perform its task or tasks without needing human intervention.

Autonomous robot is a robot that performs behaviors or tasks with a high degree of autonomy. Autonomous robotics is usually considered to be a subfield of artificial intelligence, robotics, and information engineering.

Autonomous vehicle is a mode of transport based on an autonomous driving system. The control of an autonomous vehicle is fully automated and carried out without a driver using optical sensors, radar and computer algorithms.

Autoregressive Model is a time series model that uses observations from previous time steps as input to a regression equation to predict the value at the next time step. In statistics and signal processing, an autoregressive model is a representation of a type of random process. It is used to describe certain time-varying processes in nature, economics, etc.
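The simplest case, a first-order model x_t = phi * x_(t-1), can be sketched with an ordinary least squares fit. The sketch assumes a series without an intercept term and uses hypothetical function names; practical models usually include more lags and a constant.

```python
from typing import Sequence


def fit_ar1(series: Sequence[float]) -> float:
    """Least squares estimate of phi in x_t = phi * x_(t-1) (no intercept)."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    numerator = sum(cur * prev for prev, cur in zip(series, series[1:]))
    denominator = sum(prev * prev for prev in series[:-1])
    if denominator == 0:
        raise ValueError("cannot fit: all lagged observations are zero")
    return numerator / denominator


def predict_next(series: Sequence[float], phi: float) -> float:
    """Predict the next value from the most recent observation."""
    return phi * series[-1]


history = [1.0, 2.0, 4.0, 8.0, 16.0]  # each value doubles the previous one
phi = fit_ar1(history)
print(phi)                         # 2.0
print(predict_next(history, phi))  # 32.0
```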

Auxiliary intelligence – systems based on artificial intelligence that complement human decisions and are able to learn in the process of interacting with people and the environment.

Average precision is a metric for summarizing the performance of a ranked sequence of results. Average precision is calculated by taking the average of the precision values for each relevant result (each result in the ranked list where the recall increases relative to the previous result).
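The calculation can be sketched for a ranked list in which each result is marked relevant or not. The function name is illustrative, and the sketch assumes that every relevant item appears somewhere in the list; otherwise the sum would be divided by the total number of relevant items instead.

```python
from typing import Sequence


def average_precision(relevance: Sequence[bool]) -> float:
    """Mean of the precision values taken at each rank where a relevant
    result appears (that is, where recall increases)."""
    hits = 0
    precisions = []
    for rank, is_relevant in enumerate(relevance, start=1):
        if is_relevant:
            hits += 1
            precisions.append(hits / rank)  # precision at this rank
    return sum(precisions) / len(precisions) if precisions else 0.0


# Relevant results at ranks 1, 3 and 5: precisions 1/1, 2/3 and 3/5.
print(average_precision([True, False, True, False, True]))
```

For this list the result is the mean of 1, 2/3 and 3/5, roughly 0.756.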

Ayasdi is an enterprise scale machine intelligence platform that delivers the automation that is needed to gain competitive advantage from the company’s big and complex data. Ayasdi supports large numbers of business analysts, data scientists, end users, developers and operational systems across the organization, simultaneously creating, validating, using and deploying sophisticated analyses and mathematical models at scale.

“B”

Backpropagation through time (BPTT) is a gradient-based technique for training certain types of recurrent neural networks. It can be used to train Elman networks. The algorithm was independently derived by numerous researchers.

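Backpropagation, also called “backward propagation of errors,” is an approach commonly used in the training of deep neural networks: the error measured at the output is propagated backward through the layers to compute the gradient of the loss with respect to each weight, and the weights are then adjusted to reduce the error.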

Backward Chaining, also called goal-driven inference technique, is an inference approach that reasons backward from the goal to the conditions used to get the goal. Backward chaining inference is applied in many different fields, including game theory, automated theorem proving, and artificial intelligence.
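Reasoning backward from a goal can be sketched over simple propositional rules. The rule format (a mapping from each conclusion to alternative lists of premises), the function name and the toy facts are all assumptions made for this illustration.

```python
from typing import Dict, FrozenSet, List, Optional, Set

Rules = Dict[str, List[List[str]]]  # conclusion -> alternative premise lists


def backward_chain(goal: str, facts: Set[str], rules: Rules,
                   seen: Optional[FrozenSet[str]] = None) -> bool:
    """Prove goal by working back to known facts through the rules."""
    seen = seen or frozenset()
    if goal in facts:
        return True
    if goal in seen:  # already trying to prove this goal: avoid cycles
        return False
    seen = seen | {goal}
    for premises in rules.get(goal, []):
        # The goal holds if every premise of some rule can be proven.
        if all(backward_chain(p, facts, rules, seen) for p in premises):
            return True
    return False


rules = {"mortal": [["human"]], "human": [["greek"]]}
facts = {"greek"}
print(backward_chain("mortal", facts, rules))    # True
print(backward_chain("immortal", facts, rules))  # False
```

The search starts from the goal ("mortal") and only visits rules that could establish it, which is the difference from forward chaining, where all known facts are expanded first.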

Bag-of-words model in computer vision – the bag-of-words model (BoW model) can be applied to image classification by treating image features as words. In document classification, a bag of words is a sparse vector of occurrence counts of words; that is, a sparse histogram over the vocabulary. In computer vision, a bag of visual words is a vector of occurrence counts of a vocabulary of local image features.

Bag-of-words model is a simplifying representation used in natural language processing and information retrieval (IR). In this model, a text (such as a sentence or a document) is represented as the bag (multiset) of its words, disregarding grammar and even word order but keeping multiplicity. The bag-of-words model has also been used for computer vision. The bag-of-words model is commonly used in methods of document classification where the (frequency of) occurrence of each word is used as a feature for training a classifier.
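A bag of words for text can be sketched with a multiset of tokens. The tokenization rule (lowercasing and splitting on anything other than letters, digits and apostrophes) and the function names are assumptions of this example.

```python
import re
from collections import Counter
from typing import List


def bag_of_words(text: str) -> Counter:
    """Count word occurrences, ignoring grammar and word order."""
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    return Counter(tokens)


def to_vector(bag: Counter, vocabulary: List[str]) -> List[int]:
    """Occurrence counts in a fixed vocabulary order (feature vector)."""
    return [bag[word] for word in vocabulary]


bag = bag_of_words("John likes to watch movies. Mary likes movies too.")
vocabulary = sorted(bag)
print(vocabulary)                  # ['john', 'likes', 'mary', 'movies', 'to', 'too', 'watch']
print(to_vector(bag, vocabulary))  # [1, 2, 1, 2, 1, 1, 1]
```

The resulting count vector is the kind of feature that can be passed to a document classifier.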

Baldwin effect – the skills acquired by organisms during their life as a result of learning, after a certain number of generations, are recorded in the genome.

Baseline is a model used as a reference point for comparing how well another model (typically, a more complex one) is performing. For example, a logistic regression model might serve as a good baseline for a deep model. For a particular problem, the baseline helps model developers quantify the minimal expected performance that a new model must achieve for the new model to be useful.

Batch – the set of examples used in one gradient update of model training.

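Batch Normalization is a technique in which the inputs to a layer are normalized over each mini-batch, so that they are centered around zero and their standard deviation is set to unity; a learned scale and shift are usually applied afterwards.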

Batch size – the number of examples in a batch. For example, the batch size of SGD is 1, while the batch size of a mini-batch is usually between 10 and 1000. Batch size is usually fixed during training and inference; however, TensorFlow does permit dynamic batch sizes.

Bayes’s Theorem is a famous theorem used by statisticians to describe the probability of an event based on prior knowledge of conditions that might be related to an occurrence.
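The theorem states that P(A|B) = P(B|A) * P(A) / P(B). A small sketch for a two-hypothesis case is given below; the function name and the spam-related probabilities are invented purely for illustration.

```python
def posterior(prior: float, likelihood: float, likelihood_if_not: float) -> float:
    """P(A|B) from P(A), P(B|A) and P(B|not A) using Bayes' theorem."""
    # Total probability of the evidence B over both hypotheses.
    evidence = likelihood * prior + likelihood_if_not * (1.0 - prior)
    return likelihood * prior / evidence


# Illustrative numbers (assumed): 20% of messages are spam; a given word
# appears in 50% of spam messages and in 5% of legitimate messages.
p_spam_given_word = posterior(prior=0.2, likelihood=0.5, likelihood_if_not=0.05)
print(round(p_spam_given_word, 3))  # 0.714
```

Observing the word raises the probability of spam from the prior of 0.2 to about 0.71.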

Bayesian classifier in machine learning is a family of simple probabilistic classifiers based on the use of the Bayes theorem and the “naive” assumption of the independence of the features of the objects being classified.

Bayesian Filter is a program using Bayesian logic. It is used to evaluate the header and content of email messages and determine whether or not they constitute spam (unsolicited email, the electronic equivalent of hard copy bulk mail or junk mail). A Bayesian filter works with probabilities of specific words appearing in the header or content of an email. Certain words indicate a high probability that the email is spam, such as Viagra and refinance.

Bayesian Network, also called Bayes Network, belief network, or probabilistic directed acyclic graphical model, is a probabilistic graphical model (a statistical model) that represents a set of variables and their conditional dependencies via a directed acyclic graph.

Bayesian optimization is a probabilistic regression model technique for optimizing computationally expensive objective functions by instead optimizing a surrogate that quantifies the uncertainty via a Bayesian learning technique. Since Bayesian optimization is itself very expensive, it is usually used to optimize expensive-to-evaluate tasks that have a small number of parameters, such as selecting hyperparameters.

Bayesian programming is a formalism and a methodology for specifying probabilistic models and solving problems when less than the necessary information is available.

Bees’ algorithm is a population-based search algorithm which was developed by Pham, Ghanbarzadeh et al. in 2005. It mimics the food foraging behaviour of honey bee colonies. In its basic version the algorithm performs a kind of neighbourhood search combined with global search, and can be used for both combinatorial optimization and continuous optimization. The only condition for the application of the bees’ algorithm is that some measure of distance between the solutions is defined. The effectiveness and specific abilities of the bees’ algorithm have been proven in a number of studies.

Behavior informatics (BI) – the informatics of behaviors so as to obtain behavior intelligence and behavior insights.

Behavior tree (BT) is a mathematical model of plan execution used in computer science, robotics, control systems and video games. Behavior trees describe switchings between a finite set of tasks in a modular fashion. Their strength comes from their ability to create very complex tasks composed of simple tasks, without worrying how the simple tasks are implemented. BTs present some similarities to hierarchical state machines with the key difference that the main building block of a behavior is a task rather than a state. Their ease of human understanding makes BTs less error-prone and very popular in the game developer community. BTs have been shown to generalize several other control architectures.

Belief-desire-intention software model (BDI) is a software model developed for programming intelligent agents. Superficially characterized by the implementation of an agent’s beliefs, desires and intentions, it actually uses these concepts to solve a particular problem in agent programming. In essence, it provides a mechanism for separating the activity of selecting a plan (from a plan library or an external planner application) from the execution of currently active plans. Consequently, BDI agents are able to balance the time spent on deliberating about plans (choosing what to do) and executing those plans (doing it). A third activity, creating the plans in the first place (planning), is not within the scope of the model, and is left to the system designer and programmer.

Bellman equation, named after Richard E. Bellman, is a necessary condition for optimality associated with the mathematical optimization method known as dynamic programming. It writes the “value” of a decision problem at a certain point in time in terms of the payoff from some initial choices and the “value” of the remaining decision problem that results from those initial choices. This breaks a dynamic optimization problem into a sequence of simpler subproblems, as Bellman’s “principle of optimality” prescribes.
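For a deterministic problem the equation can be written as V(s) = max over actions a of [ r(s, a) + gamma * V(next(s, a)) ]. The sketch below solves it by repeated updates (value iteration) on a tiny made-up problem; the state names, rewards and function name are assumptions chosen for illustration.

```python
from typing import Dict, Tuple

# state -> {action: (immediate payoff, next state)}; "C" is terminal.
Transitions = Dict[str, Dict[str, Tuple[float, str]]]


def value_iteration(transitions: Transitions, gamma: float = 1.0,
                    sweeps: int = 100, tol: float = 1e-9) -> Dict[str, float]:
    """Apply the Bellman update until the values stop changing."""
    values = {state: 0.0 for state in transitions}
    for _ in range(sweeps):
        delta = 0.0
        for state, actions in transitions.items():
            if not actions:  # terminal state keeps value 0
                continue
            # Payoff of the initial choice plus value of the remaining problem.
            best = max(r + gamma * values[nxt] for r, nxt in actions.values())
            delta = max(delta, abs(best - values[state]))
            values[state] = best
        if delta < tol:
            break
    return values


problem = {
    "A": {"to_B": (-1.0, "B"), "to_C": (-4.0, "C")},
    "B": {"to_C": (-1.0, "C")},
    "C": {},
}
print(value_iteration(problem))  # {'A': -2.0, 'B': -1.0, 'C': 0.0}
```

Going from A through B costs 2 in total, which is better than the direct move costing 4, so the value of A is -2.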

Benchmark (also benchmark program, benchmarking program, benchmark test) – a test program or package for evaluating (measuring and/or comparing) various aspects of the performance of a processor, individual devices, a computer, a system or a specific application or software; a benchmark allows products from different manufacturers to be compared against each other or against some standard. Related terms include online benchmark, standard benchmark, and benchmark time comparison (a comparison of benchmark execution times).

Benchmarking is a set of techniques that allow you to study the experience of competitors and implement best practices in your company.

BETA refers to a phase in online service development in which the service is coming together functionality-wise but genuine user experiences are required before the service can be finished in a user-centered way. In online service development, the aim of the beta phase is to recognize both programming issues and usability-enhancing procedures. The beta phase is particularly often used in connection with online services and it can be either freely available (open beta) or restricted to a specific target group (closed beta).

Bias is a systematic trend that causes differences between results and facts. Bias can enter at any stage of the data analysis process, including the source of the data, the estimator chosen, and how the data is analyzed. It can seriously affect the results, for example, when studying people’s shopping habits: if the sample size is not large enough, the results may not reflect the buying habits of all people. That is, there may be discrepancies between survey results and actual results.

Biased algorithm – systematic and repetitive errors in a computer system that lead to unfair results, such as privileging one group of users over others. Examples include algorithms that produce sexist or racist outcomes.

Bidirectional (BiDi) is a term used to describe a system that evaluates the text that both precedes and follows a target section of text. In contrast, a unidirectional system only evaluates the text that precedes a target section of text.

Bidirectional Encoder Representations from Transformers (BERT) is a model architecture for text representation. A trained BERT model can act as part of a larger model for text classification or other ML tasks. BERT has the following characteristics: it uses the Transformer architecture, and therefore relies on self-attention; it uses the encoder part of the Transformer, whose job is to produce good text representations rather than to perform a specific task like classification; it is bidirectional; and it uses masking for unsupervised training.

Bidirectional language model is a language model that determines the probability that a given token is present at a given location in an excerpt of text based on the preceding and following text.

Big data is a term for sets of digital data whose large size, rate of increase or complexity requires significant computing power for processing and special software tools for analysis and presentation in the form of human-perceptible results.

Big O notation is a mathematical notation that describes the limiting behavior of a function when the argument tends towards a particular value or infinity. It is a member of a family of notations invented by Paul Bachmann, Edmund Landau, and others, collectively called Bachmann–Landau notation or asymptotic notation.

Bigram – an N-gram in which N=2.

Binary choice regression model is a regression model in which the dependent variable is dichotomous or binary. The dependent variable can take only two values, which indicate, for example, membership in a particular group.

Binary classification is a type of classification task that outputs one of two mutually exclusive classes. For example, a machine learning model that evaluates email messages and outputs either “spam” or “not spam” is a binary classifier.

Binary format is any file format in which information is encoded in some format other than a standard character-encoding scheme. A file written in binary format contains information that is not displayable as characters. Software capable of understanding the particular binary format method of encoding information must be used to interpret the information in a binary-formatted file. Binary formats are often used to store more information in less space than possible in a character format file. They can also be searched and analyzed more quickly by appropriate software. A file written in binary format could store the number “7” as a binary number (instead of as a character) in as little as 3 bits (i.e., 111), but would more typically use 4 bits (i.e., 0111). Binary formats are not normally portable, however. Software program files are written in binary format. Examples of numeric data files distributed in binary format include the IBM-binary versions of the Center for Research in Security Prices files and the U.S. Department of Commerce’s National Trade Data Bank on CD-ROM. The International Monetary Fund distributes International Financial Statistics in a mixed-character format and binary (packed-decimal) format. SAS and SPSS store their system files in binary format.

Binary number is a number written using binary notation which only uses zeros and ones. Example: Decimal number 7 in binary notation is: 111.

Binary tree is a tree data structure in which each node has at most two children, which are referred to as the left child and the right child. A recursive definition using just set theory notions is that a (non-empty) binary tree is a tuple (L, S, R), where L and R are binary trees or the empty set and S is a singleton set. Some authors allow the binary tree to be the empty set as well.
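
One possible way to represent such a tree in code is a node holding a value plus optional left and right children, with `None` standing for the empty tree. The class name `Node` and the helpers `height` and `in_order` are assumptions chosen for this sketch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    value: int
    left: Optional["Node"] = None   # left child, or None if absent
    right: Optional["Node"] = None  # right child, or None if absent


def height(node):
    """Number of nodes on the longest root-to-leaf path; 0 for the empty tree."""
    if node is None:
        return 0
    return 1 + max(height(node.left), height(node.right))


def in_order(node):
    """Visit the left subtree, then the node itself, then the right subtree."""
    if node is None:
        return []
    return in_order(node.left) + [node.value] + in_order(node.right)


tree = Node(2, Node(1), Node(3, right=Node(4)))
print(height(tree))    # 3
print(in_order(tree))  # [1, 2, 3, 4]
```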

Binning is the process of combining charge from neighboring pixels in a CCD during readout. This process is performed prior to digitization in the CCD chip using dedicated serial and parallel register control. The two main benefits of binning are improved signal-to-noise ratio (SNR) and the ability to increase frame rates, albeit at the cost of reduced spatial resolution.
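
The arithmetic of binning can be sketched in software, with the caveat that real CCD binning combines charge on the chip before digitization, while this example only sums already-digitized values. The function name `bin_pixels` and the 4×4 sample frame are assumptions for illustration.

```python
def bin_pixels(image, factor=2):
    """Sum each factor x factor block of pixel values into one output pixel."""
    rows, cols = len(image), len(image[0])
    binned = []
    # Rows and columns that do not fill a complete block are ignored.
    for r in range(0, rows - rows % factor, factor):
        out_row = []
        for c in range(0, cols - cols % factor, factor):
            block_sum = sum(
                image[r + dr][c + dc]
                for dr in range(factor)
                for dc in range(factor)
            )
            out_row.append(block_sum)
        binned.append(out_row)
    return binned


frame = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]
print(bin_pixels(frame))  # [[14, 22], [46, 54]]
```

The 4×4 frame becomes a 2×2 frame: each output value collects the signal of four pixels, and spatial resolution is halved in each direction.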

Bioconservatism (a portmanteau of biology and conservatism) is a stance of hesitancy and skepticism regarding radical technological advances, especially those that seek to modify or enhance the human condition. Bioconservatism is characterized by a belief that technological trends in today’s society risk compromising human dignity, and by opposition to movements and technologies including transhumanism, human genetic modification, “strong” artificial intelligence, and the technological singularity. Many bioconservatives also oppose the use of technologies such as life extension and preimplantation genetic screening.

Biometrics is a system for recognizing people by one or more physical or behavioral traits.

Black box is a description of some deep learning systems. They take an input and provide an output, but the calculations that occur in between are not easy for humans to interpret.

Blackboard system is an artificial intelligence approach based on the blackboard architectural model, where a common knowledge base, the “blackboard”, is iteratively updated by a diverse group of specialist knowledge sources, starting with a problem specification and ending with a solution. Each knowledge source updates the blackboard with a partial solution when its internal constraints match the blackboard state. In this way, the specialists work together to solve the problem.

BLEU (Bilingual Evaluation Understudy) is a text-quality evaluation metric that produces a score between 0.0 and 1.0, inclusive, indicating the quality of a translation between two human languages (for example, between English and Russian). A BLEU score of 1.0 indicates a perfect translation; a BLEU score of 0.0 indicates a terrible translation.

Blockchain is a set of algorithms and protocols for the decentralized storage and processing of transactions, structured as a sequence of linked blocks that cannot subsequently be changed.

Boltzmann machine (also stochastic Hopfield network with hidden units) is a type of stochastic recurrent neural network and Markov random field. Boltzmann machines can be seen as the stochastic, generative counterpart of Hopfield networks.

Boolean neural network is an artificial neural network approach that consists only of Boolean neurons (AND, OR, NOT). Such an approach reduces memory use and computation time. It can be implemented on programmable circuits such as an FPGA (field-programmable gate array, a type of integrated circuit).

Boolean satisfiability problem (also propositional satisfiability problem; abbreviated SATISFIABILITY or SAT) is the problem of determining if there exists an interpretation that satisfies a given Boolean formula. In other words, it asks whether the variables of a given Boolean formula can be consistently replaced by the values TRUE or FALSE in such a way that the formula evaluates to TRUE. If this is the case, the formula is called satisfiable. On the other hand, if no such assignment exists, the function expressed by the formula is FALSE for all possible variable assignments and the formula is unsatisfiable.
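
A minimal sketch of the question SAT asks: try every TRUE/FALSE assignment and report the first one that makes the formula TRUE. This exhaustive check is only practical for a handful of variables; the function name `find_satisfying_assignment` and both sample formulas are assumptions for illustration.

```python
from itertools import product


def find_satisfying_assignment(formula, variables):
    """Return an assignment that makes formula TRUE, or None if unsatisfiable."""
    for values in product([False, True], repeat=len(variables)):
        assignment = dict(zip(variables, values))
        if formula(assignment):
            return assignment
    return None


def satisfiable(a):
    # (x OR y) AND (NOT x OR z)
    return (a["x"] or a["y"]) and ((not a["x"]) or a["z"])


def unsatisfiable(a):
    # x AND NOT x is FALSE for every assignment
    return a["x"] and not a["x"]


print(find_satisfying_assignment(satisfiable, ["x", "y", "z"]))
print(find_satisfying_assignment(unsatisfiable, ["x"]))  # None
```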

Boosting is a Machine Learning ensemble meta-algorithm for primarily reducing bias and variance in supervised learning, and a family of Machine Learning algorithms that convert weak learners to strong ones.

Bounding box – commonly used in image or video tagging, this is an imaginary box drawn on visual information. The contents of the box are labeled to help a model recognize it as a distinct type of object.

Brain technology (also self-learning know-how system) is a technology that employs the latest findings in neuroscience. The term was first introduced by the Artificial Intelligence Laboratory in Zurich, Switzerland, in the context of the ROBOY project. Brain Technology can be employed in robots, know-how management systems and any other application with self-learning capabilities. In particular, Brain Technology applications allow the visualization of the underlying learning architecture often coined as “know-how maps”.

Brain–computer interface (BCI), sometimes called a brain–machine interface (BMI), is a direct communication pathway between the brain’s electrical activity and an external device, most commonly a computer or robotic limb. Research on brain–computer interfaces was begun in the 1970s by Jacques Vidal at the University of California, Los Angeles (UCLA) under a grant from the National Science Foundation, followed by a contract from DARPA. Vidal’s 1973 paper marks the first appearance of the expression brain–computer interface in scientific literature.

Brain-inspired computing – computation on brain-like structures, or brain-like computation based on the principles by which the brain works (see also neurocomputing, neuromorphic engineering).

Branching factor – in computing, tree data structures, and game theory, the number of children at each node (the outdegree). If this value is not uniform, an average branching factor can be calculated.
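
One common way to compute an average branching factor is to average the number of children over the nodes that have at least one child. That convention, the function name, and the small example tree are assumptions made for this sketch.

```python
def average_branching_factor(children):
    """children maps each node to the list of its child nodes."""
    # Only non-leaf nodes contribute; leaves have no outgoing branches.
    counts = [len(kids) for kids in children.values() if kids]
    if not counts:
        return 0.0
    return sum(counts) / len(counts)


tree = {
    "a": ["b", "c", "d"],  # outdegree 3
    "b": ["e"],            # outdegree 1
    "c": [],
    "d": [],
    "e": [],
}
print(average_branching_factor(tree))  # 2.0
```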

Broadband refers to various high-capacity transmission technologies that transmit data, voice, and video across long distances and at high speeds. Common mediums of transmission include coaxial cables, fiber optic cables, and radio waves.

Brute-force search (also exhaustive search or generate and test) is a very general problem-solving technique and algorithmic paradigm that consists of systematically enumerating all possible candidates for the solution and checking whether each candidate satisfies the problem’s statement.
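
As a small sketch of generate and test, the example below enumerates every subset of a list and keeps those whose sum equals a target. The subset-sum problem, the function name, and the sample numbers are assumptions chosen purely for illustration.

```python
from itertools import combinations


def subsets_with_sum(numbers, target):
    """Enumerate every subset (candidate) and keep those that hit the target."""
    solutions = []
    for size in range(len(numbers) + 1):
        for candidate in combinations(numbers, size):  # generate
            if sum(candidate) == target:               # test
                solutions.append(candidate)
    return solutions


print(subsets_with_sum([3, 5, 8, 2], 10))  # [(8, 2), (3, 5, 2)]
```

The number of candidates doubles with each added element, which is why brute-force search is usually reserved for small inputs or used as a correctness baseline.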

Bucketing – converting a (usually continuous) feature into multiple binary features called buckets or bins, typically based on value range.
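
A minimal sketch of bucketing by value range: a continuous value is mapped to a one-hot list with one binary feature per bucket. The function name `bucketize` and the age boundaries are assumptions for this example.

```python
from bisect import bisect_right


def bucketize(value, boundaries):
    """Return a one-hot list with len(boundaries) + 1 binary features."""
    # bisect_right counts how many boundaries are <= value.
    index = bisect_right(boundaries, value)
    return [1 if i == index else 0 for i in range(len(boundaries) + 1)]


# Hypothetical age buckets: <18, 18-34, 35-59, 60+
boundaries = [18, 35, 60]
print(bucketize(27, boundaries))  # [0, 1, 0, 0]
print(bucketize(72, boundaries))  # [0, 0, 0, 1]
```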

Byte – eight bits. A byte is simply a chunk of 8 ones and zeros. For example: 01000001 is a byte. A computer often works with groups of bits rather than individual bits and the smallest group of bits that a computer usually works with is a byte. A byte is equal to one column in a file written in character format.
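
The example byte can be inspected with a few built-in Python calls. Reading it as the ASCII character "A" assumes an ASCII-compatible encoding.

```python
bits = "01000001"

value = int(bits, 2)       # interpret the eight bits as a binary number
as_bytes = bytes([value])  # a bytes object holding exactly one byte

print(value)                     # 65
print(as_bytes.decode("ascii"))  # A
print(format(value, "08b"))      # 01000001
```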

“C”

CAFFE is short for Convolutional Architecture for Fast Feature Embedding, an open-source deep learning framework developed at Berkeley AI Research. It supports many different deep learning architectures and GPU-accelerated computation kernels.

Calibration layer is a post-prediction adjustment, typically to account for prediction bias. The adjusted predictions and probabilities should match the distribution of an observed set of labels.

Candidate generation – the initial set of recommendations chosen by a recommendation system.

Candidate sampling is a training-time optimization in which a probability is calculated for all the positive labels, using, for example, softmax, but only for a random sample of negative labels. For example, if we have an example labeled beagle and dog, candidate sampling computes the predicted probabilities and corresponding loss terms for the beagle and dog class outputs in addition to a random subset of the remaining classes (cat, lollipop, fence). The idea is that the negative classes can learn from less frequent negative reinforcement as long as positive classes always get proper positive reinforcement, and this is indeed observed empirically. The motivation for candidate sampling is a computational efficiency win from not computing predictions for all negatives.

Canonical formats – in information technology, canonicalization is the process of making something conform with some specification… and is in an approved format. Canonicalization may sometimes mean generating canonical data from noncanonical data. Canonical formats are widely supported and considered to be optimal for long-term preservation.

Capsule neural network (CapsNet) is a machine learning system that is a type of artificial neural network (ANN) that can be used to better model hierarchical relationships. The approach is an attempt to more closely mimic biological neural organization.

Case-Based Reasoning (CBR) is a way to solve a new problem by using solutions to similar problems. It has been formalized as a process consisting of case retrieval, solution reuse, solution revision, and case retention.

Categorical data – features having a discrete set of possible values. For example, consider a categorical feature named house style, which has a discrete set of three possible values: Tudor, ranch, colonial. By representing house style as categorical data, the model can learn the separate impacts of Tudor, ranch, and colonial on house price. Sometimes, values in the discrete set are mutually exclusive, and only one value can be applied to a given example. For example, a car maker categorical feature would probably permit only a single value (Toyota) per example. Other times, more than one value may be applicable. A single car could be painted more than one different color, so a car color categorical feature would likely permit a single example to have multiple values (for example, red and white). Categorical features are sometimes called discrete features. Contrast with numerical data.
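
One common way to feed categorical data to a model is to encode each example as a vector with one position per possible value. The sketch below uses a hypothetical `encode` helper; the vocabulary of car colors (including "blue") is an assumption added for the example.

```python
def encode(values, vocabulary):
    """Return 1 for each vocabulary entry present in values, else 0."""
    present = set(values)
    return [1 if item in present else 0 for item in vocabulary]


house_styles = ["Tudor", "ranch", "colonial"]
car_colors = ["red", "white", "blue"]

# Mutually exclusive values: exactly one position is set (one-hot).
print(encode(["ranch"], house_styles))       # [0, 1, 0]

# Several values may apply: more than one position is set (multi-hot).
print(encode(["red", "white"], car_colors))  # [1, 1, 0]
```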

Center for Technological Competence is an organization that owns the results, tools for conducting fundamental research and platform solutions available to market participants to create applied solutions (products) on their basis. The Technology Competence Center can be a separate organization or be part of an application technology holding company.

Central Processing Unit (CPU) is a von Neumann cyclic processor designed to execute complex computer programs.

Centralized control is a process in which control signals are generated in a single control center and transmitted from it to numerous control objects.

Centroid – the center of a cluster as determined by a k-means or k-median algorithm. For instance, if k is 3, then the k-means or k-median algorithm finds 3 centroids.
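
For k-means, a centroid is the coordinate-wise mean of the points assigned to a cluster (k-median would use the median instead). The sketch below assumes the assignment of points to k = 3 clusters has already been made; the function name and sample points are hypothetical.

```python
def centroid(points):
    """Coordinate-wise mean of a non-empty list of equal-length points."""
    n = len(points)
    dims = len(points[0])
    return tuple(sum(p[d] for p in points) / n for d in range(dims))


# Three clusters (k = 3), each a list of 2-D points.
clusters = [
    [(1.0, 1.0), (3.0, 1.0)],
    [(10.0, 10.0), (12.0, 14.0)],
    [(-5.0, 0.0)],
]
centroids = [centroid(c) for c in clusters]
print(centroids)  # [(2.0, 1.0), (11.0, 12.0), (-5.0, 0.0)]
```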

Centroid-based clustering is a category of clustering algorithms that organizes data into nonhierarchical clusters. k-means is the most widely used centroid-based clustering algorithm. Contrast with hierarchical clustering algorithms.

Character format is any file format in which information is encoded as characters using only a standard character-encoding scheme. A file written in “character format” contains only those bytes that are prescribed in the encoding scheme as corresponding to the characters in the scheme (e.g., alphabetic and numeric characters, punctuation marks, and spaces).

Chatbot is a software application designed to simulate human conversation with users via text or speech. Also referred to as virtual agents, interactive agents, digital assistants, or conversational AI, chatbots are often integrated into applications, websites, or messaging platforms to provide support to users without the use of live human agents. Chatbots originally started out by offering users simple menus of choices, and then evolved to react to particular keywords. “But humans are very inventive in their use of language,” says Forrester’s McKeon-White. Someone looking for a password reset might say they’ve forgotten their access code, or are having problems getting into their account. “There are a lot of different ways to say the same thing,” he says. This is where AI comes in. Natural language processing is a subset of machine learning that enables a system to understand the meaning of written or even spoken language, even where there is a lot of variation in the phrasing. To succeed, a chatbot that relies on AI or machine learning needs first to be trained using a data set. In general, the bigger the training data set, and the narrower the domain, the more accurate and helpful a chatbot will be.

Checkpoint – data that captures the state of the variables of a model at a particular time. Checkpoints enable exporting model weights, as well as performing training across multiple sessions. Checkpoints also enable training to continue past errors (for example, job preemption). Note that the graph itself is not included in a checkpoint.

Chip is an electronic microcircuit of arbitrary complexity, made on a semiconductor substrate and placed in a non-separable case, or left without a case if it is included in a micro assembly.

Class – one of a set of enumerated target values for a label. For example, in a binary classification model that detects spam, the two classes are spam and not spam. In a multi-class classification model that identifies dog breeds, the classes would be poodle, beagle, pug, and so on.

Classification model is a type of machine learning model for distinguishing among two or more discrete classes. For example, a natural language processing classification model could determine whether an input sentence was in French, Spanish, or Italian.

Classification threshold is a scalar-value criterion that is applied to a model’s predicted score in order to separate the positive class from the negative class. Used when mapping logistic regression results to binary classification.
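
A minimal sketch of applying a threshold to a predicted score. The default of 0.5, the class names, and the function name `classify` are assumptions; in practice the threshold is tuned to the costs of false positives and false negatives.

```python
def classify(score, threshold=0.5):
    """Map a predicted score in [0, 1] to the positive or negative class."""
    return "spam" if score >= threshold else "not spam"


print(classify(0.73))                 # spam
print(classify(0.73, threshold=0.9))  # not spam
```

Raising the threshold makes the classifier more conservative about assigning the positive class, as the second call shows.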

Classification – classification problems use an algorithm to accurately assign test data into specific categories, such as separating apples from oranges. As a real-world example, supervised learning algorithms can be used to sort spam into a folder separate from your inbox. Linear classifiers, support vector machines, decision trees, and random forests are all common types of classification algorithms.